ARMAND G. THOMPSON

WHITEFIELD––Armand Gene Thompson, 70, of Whitefield, known as “Pop,” “Pepe,” and “Phil” to his family and friends, passed away on Tuesday, October 24, 2017, following a brief and courageous battle with cancer. He was born on July 30, 1947, in Clinton, Massachusetts, to Lucille and Armand Houle.

He spent 40 years growing up, working, and raising a family in Massachusetts. When he was 12, he became the proud big brother to his sister Debbie.

Phil joined the Army in 1967 and went to Korea, where he worked in a dental clinic and nurtured a lifelong passion for teeth and the medical field. When he returned stateside, he worked in a book bindery, and later at Fort Devens.

In 1972, he met the love of his life and best friend, Darlene. In 1974, they married and raised two boys. They moved to Whitefield in 1987 to be nearer to family. Phil worked at Togus V.A. for 30 years.

Phil enjoyed working in his yard, building flower gardens, listening to records, hosting dinner parties, creating art, and spending time with his family.

Most of all, he was a deeply spiritual man.

Phil was predeceased by his parents, Lucille and Armand Houle; and his stepfather, Robert Thompson.

He is survived by his wife, Darlene; his sons, Jesse and Silas and their wives Junko and Jenny; his grandchildren, Donovan and Theodore; his sister Deborah Matthews and her husband David; his niece and nephew, Desiree and David; as well as many members of his extended family.

Memorial donations may be made at the Kingdom Hall, 8 Cross Hill Road, Augusta.

ELIZABETH E. FISHER

FAIRFIELD––Elizabeth E. Fisher, 74, passed away on Wednesday, October 25, 2017, at her home, in Fairfield. She was born on October 15, 1943, in Waterville, the daughter of Elwood and Evelyn Folsom.

Elizabeth worked at a chicken plant, a shoe shop and the Cascade Woolen Mill, in Oakland.

She loved to crochet and go shopping. Some of Elizabeth’s other hobbies included putting together the family tree, spending time with her granddaughter and scrapbooking. Elizabeth made several family scrapbooks. She also enjoyed watching Hallmark Christmas movies, as Christmas was her favorite holiday. Elizabeth prayed daily.

Elizabeth is survived by her husband, William Fisher, of Fairfield; her three children, Scott Fisher, of Waterville, William Kelly and Amanda Kelley, of Fairfield; granddaughter Adrianna Fisher, of Waterville; sister Diane Mushero, of Fairfield; and many more family members.

An online guestbook may be signed and memories shared at www.lawrybrothers.com.

JOHN W. WITHAM

WINDSOR––John Wesley Witham, 40, of Windsor, passed away unexpectedly in his sleep on Saturday, November 4, 2017. He was born February 11, 1977, in Boothbay, to Belinda Howes Trundy and George Witham.

John grew up in New Harbor. He enjoyed spending time with his family and friends, going to the local car races, sailing, and fishing. In his spare time he liked to read. He had many different jobs in his life; he loved working at the Seagull Restaurant in New Harbor and loved to cook.

John was predeceased by his mother, Belinda Howes Trundy, and father, George Witham.

John is survived by step-father, Don Howes; wife, Michelle Witham; sons, Wesley Witham and Cole Witham, from a previous relationship; son John Witham Jr. and his two children; sister, Deride Albert; brothers, Timothy Pendergast and Virgil Cray; brothers-in-law; sisters-in-law; aunts; uncles; nieces; nephews; and cousins.

Memories and condolences may be shared at www.directcremationofmaine.com.

DONALD W. TRUSSELL JR.

WHITEFIELD––Donald Wilson Trussell Jr., 72, of Whitefield, passed away Monday, November 6, 2017, at the Augusta Rehab Center, Augusta. He was born February 3, 1945, a son of the late Donald Sr. and Emily L. (Emerson) Trussell.

He graduated from Gardiner Area High School, class of 1964.

He was a Connecticut resident from 1967 to 1998, when he returned to Maine. He was a retired mechanic and an avid drag racer for many years. He enjoyed fishing and hunting, and restoring a 1950 International Harvester truck. Donnie was a member of Gassah Guys Racing Club, and a tech guy at Winterport Dragway for many years.

Donnie is survived by daughters, Dawn Donnelly and husband Michael, of Durham, Connecticut, and Vicki Bird and husband Michael, of Deland, Florida; grandchildren, Marisa Doyon, Lauren Donnelly, and Zachary Bird; siblings, Joan Marston, of Gardiner, Nancy Jewett and husband Curtis, of Pittston, Louise Yeaton and husband Francis, of Richmond, Stephen Trussell and wife Debbie, Brian Trussell and wife Lucille, and Wayne Trussell and wife Kathy, all of Pittston; several nieces, nephews, aunts and uncles.

DONALD LACROIX

FAIRFIELD––Donald LaCroix, 83, passed away Tuesday, November 7, 2017, at MaineGeneral Hospital, in Augusta, following an illness. Donald was born on July 1, 1935, in Waterville, to Cyril and Yvonne LaCroix.

Donald attended St. Francis de Sales Catholic school and shortly after graduation he joined the U.S. Air Force. He was very proud of the time he spent in the Air Force.

He married Edna Thompson on August 22, 1959, and they shared many happy moments. They lived in Fairfield where Don was a member of the Fairfield Grover-Hinckley American Legion. Don loved his work as a mechanic and worked at Woodbury Motors, Firestone Garage, and at Al’s Sunoco. He did have to take an early retirement due to health issues.

Don was predeceased by his parents; a brother Larry, from Waterville, a twin brother Ronald, from Texas, a sister Rene Whittish, from Waterville, and his wife Edna.

Don is survived by his daughter Evelyn Knights, of Fairfield, and his son Kevin and his wife Janet, and their three daughters Tamika, Kaitlin, and Chantelle LaCroix, all from Solon; and several nieces, nephews and cousins.

Don was very proud of his three granddaughters and loved spending time with them.

He enjoyed putting puzzles together, family outings and family BBQs, just to name a few things.

ROLAND G. PAQUET

OAKLAND––Roland Gerald Paquet, 78, passed away on Wednesday, November 8, 2017, at Mount St. Joseph Residence and Rehabilitation, in Waterville. Roland was born February 17, 1939, in Bath, to Zenon Michel Paquet and Alice Antoinette Croteau Paquet.

He had patiently endured many physical hardships and challenges over the past six years, and in the process continued to touch many lives for good.

He attended St. John Catholic School, in Winslow, graduated from high school in Brunswick, and served in the Army National Guard for nine years.

He worked in the grocery business and for Bath Iron Works, and later managed the delicatessens for what is now the Hannaford chain of supermarkets. He retired from Kraft General Foods, now Kraft/Heinz, after being a top sales representative and merchandiser for that company for many years. He made many strong friendships along the way, and was well-liked everywhere he went.

Roland was a member of the Church of Jesus Christ of Latter-day Saints. He and his wife, Kelly, also served an 18-month mission for the church in Minnesota and Canada––the Canada Winnipeg Mission.

Roland was quick to see the humor in any situation, or even to create it. He saw every stranger as a potential friend and it was easy for him to talk with anyone. Roland taught money management skills at no cost to anyone who asked. He was wise, creative, and practical, and had many skills. He was handy, and willing to tackle building or fixing almost anything, and put his skills to work many times to help others or to beautify his family’s home. He enjoyed any chance to play games with friends, eat good food, take rides to the coast, go to the movies, to the country fairs, and to visit friends and family, and even to visit people he had just met. He often went with the missionaries in the area to teach and help other people. He enjoyed learning and reading from the scriptures, and loved to share what he knew to be true.

Roland was predeceased by his granddaughter, Meghan Passey.

He is survived by his wife of nearly 27 years, Kelly Maureen Abbott Paquet; his sister, Diane Kachmar and her husband Jack; his brother, Robert Paquet and his wife Cyndi; Roland’s children, Louis Paquet, James Paquet, Julie Passey, Dona Guertler, Kristin Jones, Holly Adams, Amy Paquet, and Alaina Hastings, and their spouses and children; and his stepchildren, Kristi Morgan Whiting, Kevin Morgan, Cameron Morgan, and Colin Morgan, and their spouses and children.

O’NEIL CARPENTER

OAKLAND––O’Neil Earl Carpenter, 88, died at home on Friday, November 10, 2017.

O’Neil was the eldest son of the late Arthur and Violette (Lachance) Carpenter. Neil, as he was known to family and friends, was born October 20, 1929, in Waterville, the week of the great stock market crash. This historic event shaped his hard working character and strong fiscal accountability. Yet he never hesitated to support his church, favorite children’s charities and family when needed.

Neil attended local area schools and was a member of St. Joseph’s class of 1945. He enlisted in the Army and served in Europe during World War II.

In his early years he worked for his father’s construction business, A. J. Carpenter & Sons, building many area homes. After leaving his father’s business he worked for more than 45 years for Logan & Sons, a Portland-based painting contractor. Logan & Sons was a generational family owned and operated company. Neil was their generational family employee.

Over the years he worked alongside his brothers, all of his sons, grandsons, nephews, even his second wife. It was a regular family affair.

Neil was not big in stature but was large in life. He was known for his charming personality, storytelling, and sense of humor. In his earlier years, he enjoyed getting together with his family to listen to his sister Marlene sing or going out to “Uncle Don’s camp,” having a few beers and playing horseshoes. He enjoyed his son Timmy’s singing and playing guitar and discussing all things sports with his youngest son Jason, especially watching the Patriots play and enjoying a shot of cognac. The collection of old wheat pennies, change in old bottles and the endless supply of silver or gold dollars was handed out to all the kids at Christmas.

Neil was predeceased by his parents; brothers, Donald and Raymond; his sons Robert, William Timothy and Jason O’Neil; and his first wife, Lillian (White).

He is survived by his daughters, Jacqueline Sweigart and husband Chuck, Violet White-Carpenter, of Oakland, and Jodi Jones and husband Will, of Vassalboro; sons, James O’Neil, of Waterville, Peter Boudreau and wife Jennifer, of Oakland, David Boudreau and his wife Jessica; ex-wife Judith Westman, of Lewiston; brothers, Arthur (Biz), of Fairfield, Alfred and Danny, of Waterville; sisters, Marlene Wincapaw, of Clinton, and Diana Nadeau and husband Ronald, of Oakland; ten grandchildren; 13 great-grandchildren; and many nieces, nephews, and cousins.

Please visit www.veilleuxfuneralhome.com to view a video collage of Neil’s life and to share condolences, memories and tributes with his family.

ANDREW L. HISLER SR.

SOMERVILLE––Andrew LeRoy Hisler Sr., 85, passed away at Maine Medical Center, Portland, on Thursday, November 16, 2017. Andy was born December 4, 1931, in Somerville, to parents Randolph and Eleanor Falconi Hisler.

He proudly served in the Army in the Korean War. As a young man he was gainfully employed by Lipmans Poultry, Hillcrest Poultry, Tank & Culvert, Lee Brothers Construction, and retired from BM Clarks.

Andy loved his family, riding on his tractor, and doing various odd jobs. He always enjoyed visiting with family and friends, going out to eat with his wife Joyce, and exploring the back roads of Maine. In his later years, he enjoyed camping in Jackman.

Andy was predeceased by his parents; wife Marilyn Hawes; second wife of 40 years Arlene (Tina) Light Hisler; two brothers, Stanley Hisler and Leon Routh; two sisters, Beatrice Routh and Mary Cobb; a step-son, Melvin Light, and son-in-law, Dana Chase.

Andy is survived by his wife, Joyce, of 24 years; his children, Marilyn Crochere and husband Joey, of Chelsea, Joan Patrick and husband Mark, of Ohio, Randolph Hisler and wife Colleen, of China, Andrew Hisler and wife Janice, of Somerville, Leon Hisler and companion Paula, of Somerville, and Bernice Chase and companion Al, of East Winthrop; his step-children, Travis DeBlois and wife Berdina, of Winthrop, Pete DeBlois and companion Wendy, of Winthrop, Patsy Hatch, of Dixfield, Gene Hatch, of Winthrop, Dorothy St. Hilaire and husband Kevin, of Winthrop; six grandchildren; 12 step-grandchildren; and 12 great-grandchildren.

There will be a graveside service on Saturday, November 25, 2017, at Sand Hill Cemetery in Somerville at 1 p.m.

Memorial contributions may be made to Kennebec Valley Humane Society, 20 Pet Haven Lane, Augusta ME 04330.

OTHERS DEPARTED

ODWAY SIMMONS, 79, of Nobleboro, passed away on Saturday, October 14, 2017. Locally, he is survived by a sister, Catherine Rolerson, of Jefferson.

ANNE M. SIMMONS, 78, of Augusta, passed away on Monday, October 16, 2017, at Alfond Center for Health, in Augusta, following a brief illness. Locally, she is survived by a daughter, Dawn Teed and husband David, of Vassalboro.

SUSAN M. LEEMAN, 94, of Freedom, passed away on Thursday, October 26, 2017, at Oak Grove Center, in Waterville. She graduated from Walker High School, in Liberty, class of 1941. Locally, she is survived by her daughter Beverlee J. Tibbetts, of Jefferson, and son and his wife Gary and Sharon Leeman, of Palermo; six grandchildren; several great-grandchildren and great-great-grandchildren; her brother-in-law, Bruce Leeman, of Palermo, sister-in-law Esther Mathieson, of Montville, and a large number of nieces and nephews.

RAYMOND ROBITAILLE JR., 55, of Skowhegan, passed away on Wednesday, November 1, 2017, at MaineGeneral Medical Center, following a long and courageous battle with cancer. Locally, he is survived by a daughter, Amber Sellers, of Oakland, and siblings Billy Bragg, of Fairfield, Stacy Bragg, of Waterville, and Liza Holt, of Unity.

FLORIENCE P. KENNEDY, 93, of Farmington, passed away on Wednesday, November 8, 2017, at her home. Locally, she is survived by a brother, William Bell, of Benton.

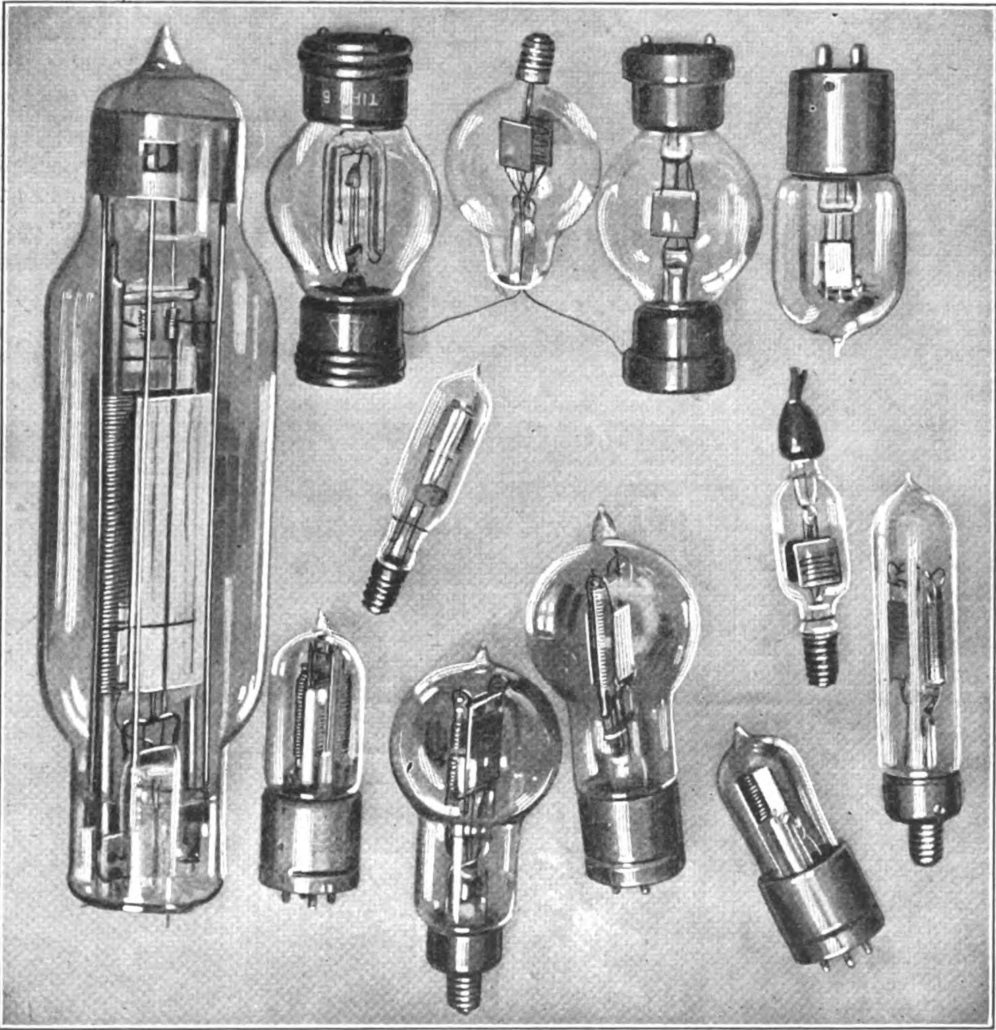

ERIC’S TECH TALK

ERIC’S TECH TALK

For Your Health

For Your Health